In this tutorial, you’ll learn how to use Python and Pandas to read Excel files using the Pandas read_excel function. Excel files are everywhere – and while they may not be the ideal data type for many data scientists, knowing how to work with them is an essential skill.

By the end of this tutorial, you’ll have learned:

- How to use the Pandas read_excel function to read an Excel file

- How to read specify an Excel sheet name to read into Pandas

- How to read multiple Excel sheets or files

- How to certain columns from an Excel file in Pandas

- How to skip rows when reading Excel files in Pandas

- And more

Let’s get started!

The Quick Answer: Use Pandas read_excel to Read Excel Files

To read Excel files in Python’s Pandas, use the read_excel() function. You can specify the path to the file and a sheet name to read, as shown below:

# Reading an Excel File in Pandas

import pandas as pd

df = pd.read_excel('/Users/datagy/Desktop/Sales.xlsx')

# With a Sheet Name

df = pd.read_excel(

io='/Users/datagy/Desktop/Sales.xlsx'

sheet_name ='North'

)In the following sections of this tutorial, you’ll learn more about the Pandas read_excel() function to better understand how to customize reading Excel files.

Understanding the Pandas read_excel Function

The Pandas read_excel() function has a ton of different parameters. In this tutorial, you’ll learn how to use the main parameters available to you that provide incredible flexibility in terms of how you read Excel files in Pandas.

| Parameter | Description | Available Option |

|---|---|---|

io= |

The string path to the workbook. | URL to file, path to file, etc. |

sheet_name= |

The name of the sheet to read. Will default to the first sheet in the workbook (position 0). | Can read either strings (for the sheet name), integers (for position), or lists (for multiple sheets) |

usecols= |

The columns to read, if not all columns are to be read | Can be strings of columns, Excel-style columns (“A:C”), or integers representing positions columns |

dtype= |

The datatypes to use for each column | Dictionary with columns as keys and data types as values |

skiprows= |

The number of rows to skip from the top | Integer value representing the number of rows to skip |

nrows= |

The number of rows to parse | Integer value representing the number of rows to read |

.read_excel() functionThe table above highlights some of the key parameters available in the Pandas .read_excel() function. The full list can be found in the official documentation. In the following sections, you’ll learn how to use the parameters shown above to read Excel files in different ways using Python and Pandas.

As shown above, the easiest way to read an Excel file using Pandas is by simply passing in the filepath to the Excel file. The io= parameter is the first parameter, so you can simply pass in the string to the file.

The parameter accepts both a path to a file, an HTTP path, an FTP path or more. Let’s see what happens when we read in an Excel file hosted on my Github page.

# Reading an Excel file in Pandas

import pandas as pd

df = pd.read_excel('https://github.com/datagy/mediumdata/raw/master/Sales.xlsx')

print(df.head())

# Returns:

# Date Customer Sales

# 0 2022-04-01 A 191

# 1 2022-04-02 B 727

# 2 2022-04-03 A 782

# 3 2022-04-04 B 561

# 4 2022-04-05 A 969If you’ve downloaded the file and taken a look at it, you’ll notice that the file has three sheets? So, how does Pandas know which sheet to load? By default, Pandas will use the first sheet (positionally), unless otherwise specified.

In the following section, you’ll learn how to specify which sheet you want to load into a DataFrame.

How to Specify Excel Sheet Names in Pandas read_excel

As shown in the previous section, you learned that when no sheet is specified, Pandas will load the first sheet in an Excel workbook. In the workbook provided, there are three sheets in the following structure:

Sales.xlsx

|---East

|---West

|---NorthBecause of this, we know that the data from the sheet “East” was loaded. If we wanted to load the data from the sheet “West”, we can use the sheet_name= parameter to specify which sheet we want to load.

The parameter accepts both a string as well as an integer. If we were to pass in a string, we can specify the sheet name that we want to load.

Let’s take a look at how we can specify the sheet name for 'West':

# Specifying an Excel Sheet to Load by Name

import pandas as pd

df = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

sheet_name='West')

print(df.head())

# Returns:

# Date Customer Sales

# 0 2022-04-01 A 504

# 1 2022-04-02 B 361

# 2 2022-04-03 A 694

# 3 2022-04-04 B 702

# 4 2022-04-05 A 255Similarly, we can load a sheet name by its position. By default, Pandas will use the position of 0, which will load the first sheet. Say we wanted to repeat our earlier example and load the data from the sheet named 'West', we would need to know where the sheet is located.

Because we know the sheet is the second sheet, we can pass in the 1st index:

# Specifying an Excel Sheet to Load by Position

import pandas as pd

df = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

sheet_name=1)

print(df.head())

# Returns:

# Date Customer Sales

# 0 2022-04-01 A 504

# 1 2022-04-02 B 361

# 2 2022-04-03 A 694

# 3 2022-04-04 B 702

# 4 2022-04-05 A 255We can see that both of these methods returned the same sheet’s data. In the following section, you’ll learn how to specify which columns to load when using the Pandas read_excel function.

How to Specify Columns Names in Pandas read_excel

There may be many times when you don’t want to load every column in an Excel file. This may be because the file has too many columns or has different columns for different worksheets.

In order to do this, we can use the usecols= parameter. It’s a very flexible parameter that lets you specify:

- A list of column names,

- A string of Excel column ranges,

- A list of integers specifying the column indices to load

Most commonly, you’ll encounter people using a list of column names to read in. Each of these columns are comma separated strings, contained in a list.

Let’s load our DataFrame from the example above, only this time only loading the 'Customer' and 'Sales' columns:

# Specifying Columns to Load by Name

import pandas as pd

df = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

usecols=['Customer', 'Sales'])

print(df.head())

# Returns:

# Customer Sales

# 0 A 191

# 1 B 727

# 2 A 782

# 3 B 561

# 4 A 969We can see that by passing in the list of strings representing the columns, we were able to parse those columns only.

If we wanted to use Excel changes, we could also specify columns 'B:C'. Let’s see what this looks like below:

# Specifying Columns to Load by Excel Range

import pandas as pd

df = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

usecols='B:C')

print(df.head())

# Returns:

# Customer Sales

# 0 A 191

# 1 B 727

# 2 A 782

# 3 B 561

# 4 A 969Finally, we can also pass in a list of integers that represent the positions of the columns we wanted to load. Because the columns are the second and third columns, we would load a list of integers as shown below:

# Specifying Columns to Load by Their Position

import pandas as pd

df = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

usecols=[1,2])

print(df.head())

# Returns:

# Customer Sales

# 0 A 191

# 1 B 727

# 2 A 782

# 3 B 561

# 4 A 969In the following section, you’ll learn how to specify data types when reading Excel files.

How to Specify Data Types in Pandas read_excel

Pandas makes it easy to specify the data type of different columns when reading an Excel file. This serves three main purposes:

- Preventing data from being read incorrectly

- Speeding up the read operation

- Saving memory

You can pass in a dictionary where the keys are the columns and the values are the data types. This ensures that data are ready correctly. Let’s see how we can specify the data types for our columns.

# Specifying Data Types for Columns When Reading Excel Files

import pandas as pd

df = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

dtype={'date':'datetime64', 'Customer': 'object', 'Sales':'int'})

print(df.head())

# Returns:

# Customer Sales

# Date Customer Sales

# 0 2022-04-01 A 191

# 1 2022-04-02 B 727

# 2 2022-04-03 A 782

# 3 2022-04-04 B 561

# 4 2022-04-05 A 969It’s important to note that you don’t need to pass in all the columns for this to work. In the next section, you’ll learn how to skip rows when reading Excel files.

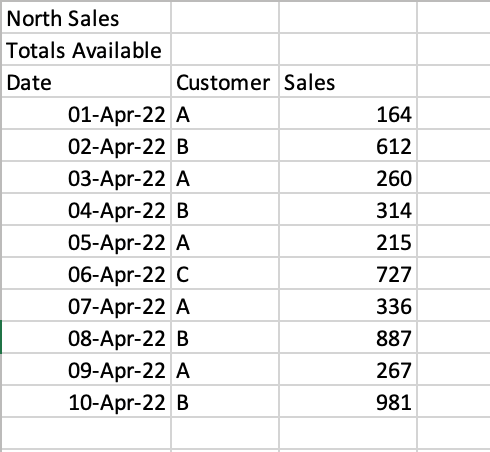

How to Skip Rows When Reading Excel Files in Pandas

In some cases, you’ll encounter files where there are formatted title rows in your Excel file, as shown below:

If we were to read the sheet 'North', we would get the following returned:

# Reading a poorly formatted Excel file

import pandas as pd

df = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

sheet_name='North')

print(df.head())

# Returns:

# North Sales Unnamed: 1 Unnamed: 2

# 0 Totals Available NaN NaN

# 1 Date Customer Sales

# 2 2022-04-01 00:00:00 A 164

# 3 2022-04-02 00:00:00 B 612

# 4 2022-04-03 00:00:00 A 260Pandas makes it easy to skip a certain number of rows when reading an Excel file. This can be done using the skiprows= parameter. We can see that we need to skip two rows, so we can simply pass in the value 2, as shown below:

# Reading a Poorly Formatted File Correctly

import pandas as pd

df = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

sheet_name='North',

skiprows=2)

print(df.head())

# Returns:

# Date Customer Sales

# 0 2022-04-01 A 164

# 1 2022-04-02 B 612

# 2 2022-04-03 A 260

# 3 2022-04-04 B 314

# 4 2022-04-05 A 215This read the file much more accurately! It can be a lifesaver when working with poorly formatted files. In the next section, you’ll learn how to read multiple sheets in an Excel file in Pandas.

How to Read Multiple Sheets in an Excel File in Pandas

Pandas makes it very easy to read multiple sheets at the same time. This can be done using the sheet_name= parameter. In our earlier examples, we passed in only a single string to read a single sheet. However, you can also pass in a list of sheets to read multiple sheets at once.

Let’s see how we can read our first two sheets:

# Reading Multiple Excel Sheets at Once in Pandas

import pandas as pd

dfs = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

sheet_name=['East', 'West'])

print(type(dfs))

# Returns: <class 'dict'>In the example above, we passed in a list of sheets to read. When we used the type() function to check the type of the returned value, we saw that a dictionary was returned.

Each of the sheets is a key of the dictionary with the DataFrame being the corresponding key’s value. Let’s see how we can access the 'West' DataFrame:

# Reading Multiple Excel Sheets in Pandas

import pandas as pd

dfs = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

sheet_name=['East', 'West'])

print(dfs.get('West').head())

# Returns:

# Date Customer Sales

# 0 2022-04-01 A 504

# 1 2022-04-02 B 361

# 2 2022-04-03 A 694

# 3 2022-04-04 B 702

# 4 2022-04-05 A 255You can also read all of the sheets at once by specifying None for the value of sheet_name=. Similarly, this returns a dictionary of all sheets:

# Reading Multiple Excel Sheets in Pandas

import pandas as pd

dfs = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

sheet_name=None)In the next section, you’ll learn how to read multiple Excel files in Pandas.

How to Read Only n Lines When Reading Excel Files in Pandas

When working with very large Excel files, it can be helpful to only sample a small subset of the data first. This allows you to quickly load the file to better be able to explore the different columns and data types.

This can be done using the nrows= parameter, which accepts an integer value of the number of rows you want to read into your DataFrame. Let’s see how we can read the first five rows of the Excel sheet:

# Reading n Number of Rows of an Excel Sheet

import pandas as pd

df = pd.read_excel(

io='https://github.com/datagy/mediumdata/raw/master/Sales.xlsx',

nrows=5)

print(df)

# Returns:

# Date Customer Sales

# 0 2022-04-01 A 191

# 1 2022-04-02 B 727

# 2 2022-04-03 A 782

# 3 2022-04-04 B 561

# 4 2022-04-05 A 969Conclusion

In this tutorial, you learned how to use Python and Pandas to read Excel files into a DataFrame using the .read_excel() function. You learned how to use the function to read an Excel, specify sheet names, read only particular columns, and specify data types. You then learned how skip rows, read only a set number of rows, and read multiple sheets.

Additional Resources

To learn more about related topics, check out the tutorials below:

- Pandas Dataframe to CSV File – Export Using .to_csv()

- Combine Data in Pandas with merge, join, and concat

- Introduction to Pandas for Data Science

- Summarizing and Analyzing a Pandas DataFrame

You can use the following basic syntax to set the column names of a DataFrame when importing an Excel file into pandas:

colnames = ['col1', 'col2', 'col3'] df = pd.read_excel('my_data.xlsx', names=colnames)

The names argument takes a list of names that you’d like to use for the columns in the DataFrame.

By using this argument, you also tell pandas to use the first row in the Excel file as the first row in the DataFrame instead of using it as the header row.

The following example shows how to use this syntax in practice.

Suppose we have the following Excel file called players_data.xlsx:

From the file we can see that the first row does not contain any column names.

If we import the Excel file using the read_excel() function, pandas will attempt to use the values in the first row as the column names for the DataFrame:

import pandas as pd #import Excel file df = pd.read_excel('players_data.xlsx') #view resulting DataFrame print(df) A 22 10 0 B 14 9 1 C 29 6 2 D 30 2 3 E 22 9 4 F 31 10

However, we can use the names argument to specify our own column names when importing the Excel file:

import pandas as pd #specify column names colnames = ['team', 'points', 'rebounds'] #import Excel file and use specified column names df = pd.read_excel('players_data.xlsx', names=colnames) #view resulting DataFrame print(df) team points rebounds 0 B 14 9 1 C 29 6 2 D 30 2 3 E 22 9 4 F 31 10

Notice that the first row in the Excel file is no longer used as the header row.

Instead, the column names that we specified using the names argument are now used as the column names.

Note: You can find the complete documentation for the pandas read_excel() function here.

Additional Resources

The following tutorials explain how to perform other common tasks in pandas:

Pandas: How to Read Excel File with Merged Cells

Pandas: Skip Specific Columns when Importing Excel File

Pandas: How to Specify dtypes when Importing Excel File

Pandas: How to Skip Rows when Reading Excel File

pandas.read_excel() function is used to read excel sheet with extension xlsx into pandas DataFrame. By reading a single sheet it returns a pandas DataFrame object, but reading two sheets it returns a Dict of DataFrame.

pandas Read Excel Key Points

- This supports to read files with extension xls, xlsx, xlsm, xlsb, odf, ods and odt

- Can load excel files stored in a local filesystem or from an URL.

- For URL, it supports http, ftp, s3, and file.

- Also supports reading from a single sheet or a list of sheets.

- When reading a two sheets, it returns a Dict of DataFrame.

Table of contents –

- Read Excel Sheet into DataFrame

- Read by Ignoring Column Names

- Set Column from Excel as Index

- Read Excel by Sheet Name

- Read Two Sheets

- Skip Columns From Excel

- Skip Rows From Excel

- Other Important Params

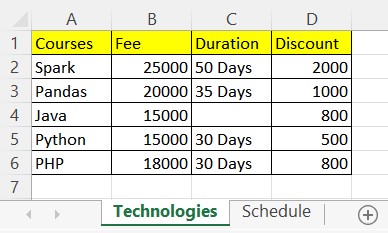

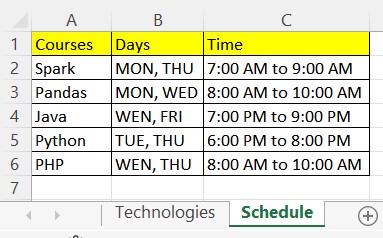

I have an excel file with two sheets named Technologies and Schedule, I will be using this to demonstrate how to read into pandas DataFrame.

Notice that on our excel file the top row contains the header of the table which can be used as column names on DataFrame.

1. pandas Read Excel Sheet

Use pandas.read_excel() function to read excel sheet into pandas DataFrame, by default it loads the first sheet from the excel file and parses the first row as a DataFrame column name. Excel file has an extension .xlsx. This function also supports several extensions xls, xlsx, xlsm, xlsb, odf, ods and odt .

Following are some of the features supported by read_excel() with optional param.

- Reading excel file from URL, S3, and from local file ad supports several extensions.

- Ignoreing the column names and provides an option to set column names.

- Setting column as Index

- Considering multiple values as NaN

- Decimal points to use for numbers

- Data types for each column

- Skipping rows and columns

I will cover how to use some of these optional params with examples, first let’s see how to read an excel sheet & create a DataFrame without any params.

import pandas as pd

# Read Excel file

df = pd.read_excel('c:/apps/courses_schedule.xlsx')

print(df)

# Outputs

# Courses Fee Duration Discount

#0 Spark 25000 50 Days 2000

#1 Pandas 20000 35 Days 1000

#2 Java 15000 NaN 800

#3 Python 15000 30 Days 500

#4 PHP 18000 30 Days 800

Related: pandas Write to Excel Sheet

By default, it considers the first row from excel as a header and used it as DataFrame column names. In case you wanted to consider the first row from excel as a data record use header=None param and use names param to specify the column names. Not specifying names result in column names with numerical numbers.

# Read excel by considering first row as data

columns = ['courses','course_fee','course_duration','course_discount']

df2 = pd.read_excel('c:/apps/courses_schedule.xlsx',

header=None, names = columns)

print(df2)

# Outputs

#0 courses course_fee Duration Discount

#1 Spark 25000 50 Days 2000

#2 Pandas 20000 35 Days 1000

#3 Java 15000 NaN 800

#4 Python 15000 30 Days 500

#5 PHP 18000 30 Days 800

3. Set Column from Excel as Index

If you notice, the DataFrame was created with the default index, if you wanted to set the column name as index use index_col param. This param takes values {int, list of int, default None}. If a list is passed with header positions, it creates a MultiIndex.

By default, it is set to None meaning not column is set as an index.

# Read excel by setting column as index

df2 = pd.read_excel('c:/apps/courses_schedule.xlsx',

index_col=0)

print(df2)

# Outputs

# Fee Duration Discount

#Courses

#Spark 25000 50 Days 2000

#Pandas 20000 35 Days 1000

#Java 15000 NaN 800

#Python 15000 30 Days 500

#PHP 18000 30 Days 800

4. Read Excel by Sheet Name

As I said in the above section by default pandas read the first sheet from the excel file and provide a sheet_name param to read a specific sheet by name. This param takes {str, int, list, or None} as values. This is also used to load a sheet by position.

By default, it is set to 0 meaning load the first sheet.

# Read specific excel sheet

df = pd.read_excel('records.xlsx', sheet_name='Sheet1')

print(df)

5. Read Two Sheets

sheet_name param also takes a list of sheet names as values that can be used to read two sheets into pandas DataFrame. Not that while reading two sheets it returns a Dict of DataFrame. The key in Dict is a sheet name and the value would be DataFrame.

Use None to load all sheets from excel and returns a Dict of Dictionary.

# Read Multiple sheets

dict_df = pd.read_excel('c:/apps/courses_schedule.xlsx',

sheet_name=['Technologies','Schedule'])

# Get DataFrame from Dict

technologies_df = dict_df .get('Technologies')

schedule_df = dict_df.get('Schedule')

# Print DataFrame's

print(technologies_df)

print(schedule_df)

I will leave this to you to execute and validate the output.

6. Skip Columns From Excel Sheet

Sometimes while reading an excel sheet into pandas DataFrame you may need to skip columns, you can do this by using usecols param. This takes values {int, str, list-like, or callable default None}. To specify the list of column names or positions use a list of strings or a list of int.

By default it is set to None meaning load all columns

# Read excel by skipping columns

df2 = pd.read_excel('c:/apps/courses_schedule.xlsx',

usecols=['Courses', 'Duration'])

print(df2)

# Outputs

# Courses Duration

#0 Spark 50 Days

#1 Pandas 35 Days

#2 Java NaN

#3 Python 30 Days

#4 PHP 30 Days

Alternatively, you can also write it by column position.

# Skip columns with list of values

df = pd.read_excel('records.xlsx', usecols=[0,2])

print(df)

Also supports a range of columns as value. For example, value ‘B:D’ means parsing B, C, and D columns.

# Skip columns by range

df2 = pd.read_excel('c:/apps/courses_schedule.xlsx',

usecols='B:D')

print(df2)

Fee Duration Discount

0 25000 50 Days 2000

1 20000 35 Days 1000

2 15000 NaN 800

3 15000 30 Days 500

4 18000 30 Days 800

7. Skip Rows from Excel Sheet

Use skiprows param to skip rows from the excel file, this param takes values {list-like, int, or callable, optional}. With this, you can skip the first few rows, selected rows, and range of rows. The below example skips the first 3 rows and considers the 4th row from excel as the header.

# Read excel file by skipping rows

df2 = pd.read_excel('c:/apps/courses_schedule.xlsx',

skiprows=2)

print(df2)

Pandas 20000 35 Days 1000

0 Java 15000 NaN 800

1 Python 15000 30 Days 500

2 PHP 18000 30 Days 800

Use header=None to consider the 4th row as data. you can also use a list of rows to skip.

# Using skiprows to skip rows

df2 = pd.read_excel('c:/apps/courses_schedule.xlsx',

skiprows=[1,3])

print(df2)

Courses Fee Duration Discount

0 Pandas 20000 35 Days 1000

1 Python 15000 30 Days 500

2 PHP 18000 30 Days 800

By using a lambda expression.

# Using skiprows with lambda

df2 = pd.read_excel('c:/apps/courses_schedule.xlsx',

skiprows=lambda x: x in [1,3])

print(df2)

8. Other Important Params

- dtype – Dict with column name an type.

- nrows – How many rows to parse.

- na_values – Additional strings to recognize as NA/NaN.

- keep_default_na – Whether or not to include the default NaN values when parsing the data.

- na_filter – Filters missing values.

- parse_dates – Specify the column index you wanted to parse as dates

- thousands – Thousands separator for parsing string columns to numeric.

- skipfooter – Specify how to rows you wanted to skip from the footer.

- mangle_dupe_cols – Duplicate columns will be specified as ‘X’, ‘X.1’, …’X.N’,

For complete params and description, refer to pandas documentation.

Conclusion

In this article, you have learned how to read an Excel sheet and covert it into DataFrame by ignoring header, skipping rows, skipping columns, specifying column names, and many more.

Happy Learning !!

Related Articles

- pandas ExcelWriter Usage with Examples

- pandas write CSV file

- Pandas Read SQL Query or Table with Examples

- Pandas Read TSV with Examples

- Pandas Read Text with Examples

- Pandas read_csv() with Examples

- Pandas Read JSON File with Examples

- How to Read CSV from String in Pandas

- Pandas Write to Excel with Examples

References

- https://docs.microsoft.com/en-us/deployoffice/compat/office-file-format-reference

- https://en.wikipedia.org/wiki/List_of_Microsoft_Office_filename_extensions

В Python данные из файла Excel считываются в объект DataFrame. Для этого используется функция read_excel() модуля pandas.

Лист Excel — это двухмерная таблица. Объект DataFrame также представляет собой двухмерную табличную структуру данных.

- Пример использования Pandas read_excel()

- Список заголовков столбцов листа Excel

- Вывод данных столбца

- Пример использования Pandas to Excel: read_excel()

- Чтение файла Excel без строки заголовка

- Лист Excel в Dict, CSV и JSON

- Ресурсы

Предположим, что у нас есть документ Excel, состоящий из двух листов: «Employees» и «Cars». Верхняя строка содержит заголовок таблицы.

Ниже приведен код, который считывает данные листа «Employees» и выводит их.

import pandas

excel_data_df = pandas.read_excel('records.xlsx', sheet_name='Employees')

# print whole sheet data

print(excel_data_df)

Вывод:

EmpID EmpName EmpRole 0 1 Pankaj CEO 1 2 David Lee Editor 2 3 Lisa Ray Author

Первый параметр, который принимает функция read_excel ()— это имя файла Excel. Второй параметр (sheet_name) определяет лист для считывания данных.

При выводе содержимого объекта DataFrame мы получаем двухмерные таблицы, схожие по своей структуре со структурой документа Excel.

Чтобы получить список заголовков столбцов таблицы, используется свойство columns объекта Dataframe. Пример реализации:

print(excel_data_df.columns.ravel())

Вывод:

['Pankaj', 'David Lee', 'Lisa Ray']

Мы можем получить данные из столбца и преобразовать их в список значений. Пример:

print(excel_data_df['EmpName'].tolist())

Вывод:

['Pankaj', 'David Lee', 'Lisa Ray']

Можно указать имена столбцов для чтения из файла Excel. Это потребуется, если нужно вывести данные из определенных столбцов таблицы.

import pandas

excel_data_df = pandas.read_excel('records.xlsx', sheet_name='Cars', usecols=['Car Name', 'Car Price'])

print(excel_data_df)

Вывод:

Car Name Car Price 0 Honda City 20,000 USD 1 Bugatti Chiron 3 Million USD 2 Ferrari 458 2,30,000 USD

Если в листе Excel нет строки заголовка, нужно передать его значение как None.

excel_data_df = pandas.read_excel('records.xlsx', sheet_name='Numbers', header=None)

Если вы передадите значение заголовка как целое число (например, 3), тогда третья строка станет им. При этом считывание данных начнется со следующей строки. Данные, расположенные перед строкой заголовка, будут отброшены.

Объект DataFrame предоставляет различные методы для преобразования табличных данных в формат Dict , CSV или JSON.

excel_data_df = pandas.read_excel('records.xlsx', sheet_name='Cars', usecols=['Car Name', 'Car Price'])

print('Excel Sheet to Dict:', excel_data_df.to_dict(orient='record'))

print('Excel Sheet to JSON:', excel_data_df.to_json(orient='records'))

print('Excel Sheet to CSV:n', excel_data_df.to_csv(index=False))

Вывод:

Excel Sheet to Dict: [{'Car Name': 'Honda City', 'Car Price': '20,000 USD'}, {'Car Name': 'Bugatti Chiron', 'Car Price': '3 Million USD'}, {'Car Name': 'Ferrari 458', 'Car Price': '2,30,000 USD'}]

Excel Sheet to JSON: [{"Car Name":"Honda City","Car Price":"20,000 USD"},{"Car Name":"Bugatti Chiron","Car Price":"3 Million USD"},{"Car Name":"Ferrari 458","Car Price":"2,30,000 USD"}]

Excel Sheet to CSV:

Car Name,Car Price

Honda City,"20,000 USD"

Bugatti Chiron,3 Million USD

Ferrari 458,"2,30,000 USD"

- Документы API pandas read_excel()

Дайте знать, что вы думаете по этой теме материала в комментариях. Мы крайне благодарны вам за ваши комментарии, дизлайки, подписки, лайки, отклики!

The read_excel() method can read Excel 2003 (.xls) and

Excel 2007+ (.xlsx) files using the xlrd Python

module. The to_excel() instance method is used for

saving a DataFrame to Excel. Generally the semantics are

similar to working with csv data. See the cookbook for some

advanced strategies

10.5.1 Reading Excel Files

In the most basic use-case, read_excel takes a path to an Excel

file, and the sheetname indicating which sheet to parse.

# Returns a DataFrame read_excel('path_to_file.xls', sheetname='Sheet1')

10.5.1.1 ExcelFile class

To facilitate working with multiple sheets from the same file, the ExcelFile

class can be used to wrap the file and can be be passed into read_excel

There will be a performance benefit for reading multiple sheets as the file is

read into memory only once.

xlsx = pd.ExcelFile('path_to_file.xls) df = pd.read_excel(xlsx, 'Sheet1')

The ExcelFile class can also be used as a context manager.

with pd.ExcelFile('path_to_file.xls') as xls: df1 = pd.read_excel(xls, 'Sheet1') df2 = pd.read_excel(xls, 'Sheet2')

The sheet_names property will generate

a list of the sheet names in the file.

The primary use-case for an ExcelFile is parsing multiple sheets with

different parameters

data = {} # For when Sheet1's format differs from Sheet2 with pd.ExcelFile('path_to_file.xls') as xls: data['Sheet1'] = pd.read_excel(xls, 'Sheet1', index_col=None, na_values=['NA']) data['Sheet2'] = pd.read_excel(xls, 'Sheet2', index_col=1)

Note that if the same parsing parameters are used for all sheets, a list

of sheet names can simply be passed to read_excel with no loss in performance.

# using the ExcelFile class data = {} with pd.ExcelFile('path_to_file.xls') as xls: data['Sheet1'] = read_excel(xls, 'Sheet1', index_col=None, na_values=['NA']) data['Sheet2'] = read_excel(xls, 'Sheet2', index_col=None, na_values=['NA']) # equivalent using the read_excel function data = read_excel('path_to_file.xls', ['Sheet1', 'Sheet2'], index_col=None, na_values=['NA'])

New in version 0.12.

ExcelFile has been moved to the top level namespace.

New in version 0.17.

read_excel can take an ExcelFile object as input

10.5.1.2 Specifying Sheets

Note

The second argument is sheetname, not to be confused with ExcelFile.sheet_names

Note

An ExcelFile’s attribute sheet_names provides access to a list of sheets.

- The arguments

sheetnameallows specifying the sheet or sheets to read. - The default value for

sheetnameis 0, indicating to read the first sheet - Pass a string to refer to the name of a particular sheet in the workbook.

- Pass an integer to refer to the index of a sheet. Indices follow Python

convention, beginning at 0. - Pass a list of either strings or integers, to return a dictionary of specified sheets.

- Pass a

Noneto return a dictionary of all available sheets.

# Returns a DataFrame read_excel('path_to_file.xls', 'Sheet1', index_col=None, na_values=['NA'])

Using the sheet index:

# Returns a DataFrame read_excel('path_to_file.xls', 0, index_col=None, na_values=['NA'])

Using all default values:

# Returns a DataFrame read_excel('path_to_file.xls')

Using None to get all sheets:

# Returns a dictionary of DataFrames read_excel('path_to_file.xls',sheetname=None)

Using a list to get multiple sheets:

# Returns the 1st and 4th sheet, as a dictionary of DataFrames. read_excel('path_to_file.xls',sheetname=['Sheet1',3])

New in version 0.16.

read_excel can read more than one sheet, by setting sheetname to either

a list of sheet names, a list of sheet positions, or None to read all sheets.

New in version 0.13.

Sheets can be specified by sheet index or sheet name, using an integer or string,

respectively.

10.5.1.3 Reading a MultiIndex

New in version 0.17.

read_excel can read a MultiIndex index, by passing a list of columns to index_col

and a MultiIndex column by passing a list of rows to header. If either the index

or columns have serialized level names those will be read in as well by specifying

the rows/columns that make up the levels.

For example, to read in a MultiIndex index without names:

In [1]: df = pd.DataFrame({'a':[1,2,3,4], 'b':[5,6,7,8]}, ...: index=pd.MultiIndex.from_product([['a','b'],['c','d']])) ...: In [2]: df.to_excel('path_to_file.xlsx') In [3]: df = pd.read_excel('path_to_file.xlsx', index_col=[0,1]) In [4]: df Out[4]: a b a c 1 5 d 2 6 b c 3 7 d 4 8

If the index has level names, they will parsed as well, using the same

parameters.

In [5]: df.index = df.index.set_names(['lvl1', 'lvl2']) In [6]: df.to_excel('path_to_file.xlsx') In [7]: df = pd.read_excel('path_to_file.xlsx', index_col=[0,1]) In [8]: df Out[8]: a b lvl1 lvl2 a c 1 5 d 2 6 b c 3 7 d 4 8

If the source file has both MultiIndex index and columns, lists specifying each

should be passed to index_col and header

In [9]: df.columns = pd.MultiIndex.from_product([['a'],['b', 'd']], names=['c1', 'c2']) In [10]: df.to_excel('path_to_file.xlsx') In [11]: df = pd.read_excel('path_to_file.xlsx', ....: index_col=[0,1], header=[0,1]) ....: In [12]: df Out[12]: c1 a c2 b d lvl1 lvl2 a c 1 5 d 2 6 b c 3 7 d 4 8

Warning

Excel files saved in version 0.16.2 or prior that had index names will still able to be read in,

but the has_index_names argument must specified to True.

10.5.1.4 Parsing Specific Columns

It is often the case that users will insert columns to do temporary computations

in Excel and you may not want to read in those columns. read_excel takes

a parse_cols keyword to allow you to specify a subset of columns to parse.

If parse_cols is an integer, then it is assumed to indicate the last column

to be parsed.

read_excel('path_to_file.xls', 'Sheet1', parse_cols=2)

If parse_cols is a list of integers, then it is assumed to be the file column

indices to be parsed.

read_excel('path_to_file.xls', 'Sheet1', parse_cols=[0, 2, 3])

10.5.1.5 Cell Converters

It is possible to transform the contents of Excel cells via the converters

option. For instance, to convert a column to boolean:

read_excel('path_to_file.xls', 'Sheet1', converters={'MyBools': bool})

This options handles missing values and treats exceptions in the converters

as missing data. Transformations are applied cell by cell rather than to the

column as a whole, so the array dtype is not guaranteed. For instance, a

column of integers with missing values cannot be transformed to an array

with integer dtype, because NaN is strictly a float. You can manually mask

missing data to recover integer dtype:

cfun = lambda x: int(x) if x else -1 read_excel('path_to_file.xls', 'Sheet1', converters={'MyInts': cfun})

10.5.2 Writing Excel Files

10.5.2.1 Writing Excel Files to Disk

To write a DataFrame object to a sheet of an Excel file, you can use the

to_excel instance method. The arguments are largely the same as to_csv

described above, the first argument being the name of the excel file, and the

optional second argument the name of the sheet to which the DataFrame should be

written. For example:

df.to_excel('path_to_file.xlsx', sheet_name='Sheet1')

Files with a .xls extension will be written using xlwt and those with a

.xlsx extension will be written using xlsxwriter (if available) or

openpyxl.

The DataFrame will be written in a way that tries to mimic the REPL output. One

difference from 0.12.0 is that the index_label will be placed in the second

row instead of the first. You can get the previous behaviour by setting the

merge_cells option in to_excel() to False:

df.to_excel('path_to_file.xlsx', index_label='label', merge_cells=False)

The Panel class also has a to_excel instance method,

which writes each DataFrame in the Panel to a separate sheet.

In order to write separate DataFrames to separate sheets in a single Excel file,

one can pass an ExcelWriter.

with ExcelWriter('path_to_file.xlsx') as writer: df1.to_excel(writer, sheet_name='Sheet1') df2.to_excel(writer, sheet_name='Sheet2')

Note

Wringing a little more performance out of read_excel

Internally, Excel stores all numeric data as floats. Because this can

produce unexpected behavior when reading in data, pandas defaults to trying

to convert integers to floats if it doesn’t lose information (1.0 -->). You can pass

1convert_float=False to disable this behavior, which

may give a slight performance improvement.

10.5.2.2 Writing Excel Files to Memory

New in version 0.17.

Pandas supports writing Excel files to buffer-like objects such as StringIO or

BytesIO using ExcelWriter.

New in version 0.17.

Added support for Openpyxl >= 2.2

# Safe import for either Python 2.x or 3.x try: from io import BytesIO except ImportError: from cStringIO import StringIO as BytesIO bio = BytesIO() # By setting the 'engine' in the ExcelWriter constructor. writer = ExcelWriter(bio, engine='xlsxwriter') df.to_excel(writer, sheet_name='Sheet1') # Save the workbook writer.save() # Seek to the beginning and read to copy the workbook to a variable in memory bio.seek(0) workbook = bio.read()

Note

engine is optional but recommended. Setting the engine determines

the version of workbook produced. Setting engine='xlrd' will produce an

Excel 2003-format workbook (xls). Using either 'openpyxl' or

'xlsxwriter' will produce an Excel 2007-format workbook (xlsx). If

omitted, an Excel 2007-formatted workbook is produced.

10.5.3 Excel writer engines

New in version 0.13.

pandas chooses an Excel writer via two methods:

- the

enginekeyword argument - the filename extension (via the default specified in config options)

By default, pandas uses the XlsxWriter for .xlsx and openpyxl

for .xlsm files and xlwt for .xls files. If you have multiple

engines installed, you can set the default engine through setting the

config options io.excel.xlsx.writer and

io.excel.xls.writer. pandas will fall back on openpyxl for .xlsx

files if Xlsxwriter is not available.

To specify which writer you want to use, you can pass an engine keyword

argument to to_excel and to ExcelWriter. The built-in engines are:

openpyxl: This includes stable support for Openpyxl from 1.6.1. However,

it is advised to use version 2.2 and higher, especially when working with

styles.xlsxwriterxlwt

# By setting the 'engine' in the DataFrame and Panel 'to_excel()' methods. df.to_excel('path_to_file.xlsx', sheet_name='Sheet1', engine='xlsxwriter') # By setting the 'engine' in the ExcelWriter constructor. writer = ExcelWriter('path_to_file.xlsx', engine='xlsxwriter') # Or via pandas configuration. from pandas import options options.io.excel.xlsx.writer = 'xlsxwriter' df.to_excel('path_to_file.xlsx', sheet_name='Sheet1')

Why learn to work with Excel with Python? Excel is one of the most popular and widely-used data tools; it’s hard to find an organization that doesn’t work with it in some way. From analysts, to sales VPs, to CEOs, various professionals use Excel for both quick stats and serious data crunching.

With Excel being so pervasive, data professionals must be familiar with it. Working with data in Python or R offers serious advantages over Excel’s UI, so finding a way to work with Excel using code is critical. Thankfully, there’s a great tool already out there for using Excel with Python called pandas.

Pandas has excellent methods for reading all kinds of data from Excel files. You can also export your results from pandas back to Excel, if that’s preferred by your intended audience. Pandas is great for other routine data analysis tasks, such as:

- quick Exploratory Data Analysis (EDA)

- drawing attractive plots

- feeding data into machine learning tools like scikit-learn

- building machine learning models on your data

- taking cleaned and processed data to any number of data tools

Pandas is better at automating data processing tasks than Excel, including processing Excel files.

In this tutorial, we are going to show you how to work with Excel files in pandas. We will cover the following concepts.

- setting up your computer with the necessary software

- reading in data from Excel files into pandas

- data exploration in pandas

- visualizing data in pandas using the matplotlib visualization library

- manipulating and reshaping data in pandas

- moving data from pandas into Excel

Note that this tutorial does not provide a deep dive into pandas. To explore pandas more, check out our course.

System Prerequisites

We will use Python 3 and Jupyter Notebook to demonstrate the code in this tutorial.In addition to Python and Jupyter Notebook, you will need the following Python modules:

- matplotlib — data visualization

- NumPy — numerical data functionality

- OpenPyXL — read/write Excel 2010 xlsx/xlsm files

- pandas — data import, clean-up, exploration, and analysis

- xlrd — read Excel data

- xlwt — write to Excel

- XlsxWriter — write to Excel (xlsx) files

There are multiple ways to get set up with all the modules. We cover three of the most common scenarios below.

- If you have Python installed via Anaconda package manager, you can install the required modules using the command

conda install. For example, to install pandas, you would execute the command —conda install pandas. - If you already have a regular, non-Anaconda Python installed on the computer, you can install the required modules using

pip. Open your command line program and execute commandpip install <module name>to install a module. You should replace<module name>with the actual name of the module you are trying to install. For example, to install pandas, you would execute command —pip install pandas. - If you don’t have Python already installed, you should get it through the Anaconda package manager. Anaconda provides installers for Windows, Mac, and Linux Computers. If you choose the full installer, you will get all the modules you need, along with Python and pandas within a single package. This is the easiest and fastest way to get started.

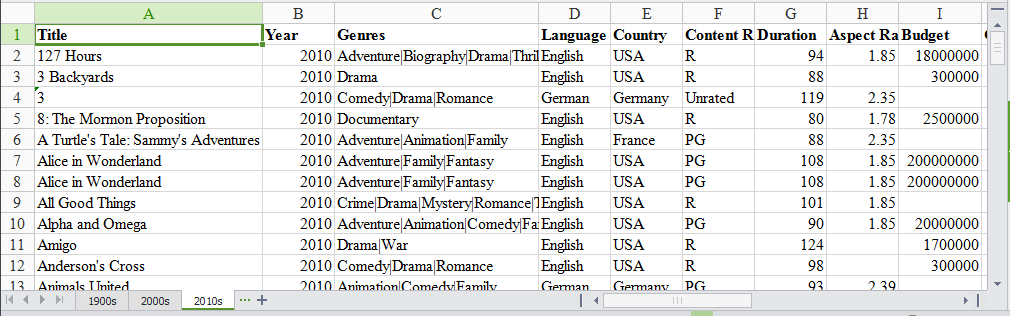

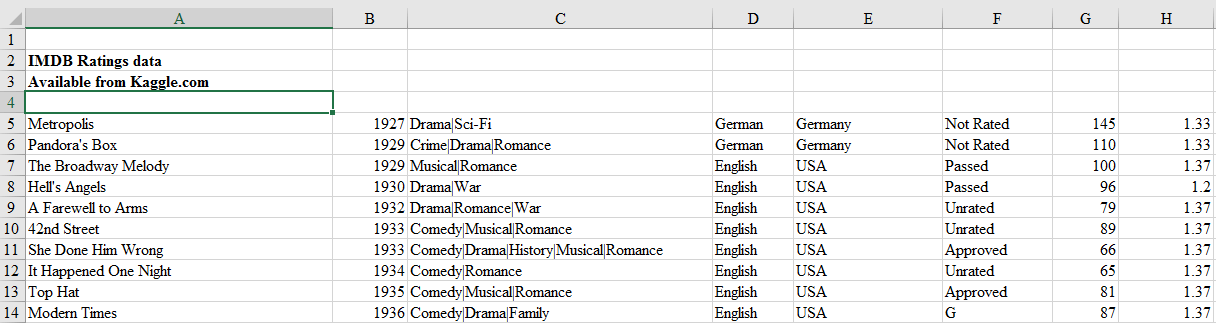

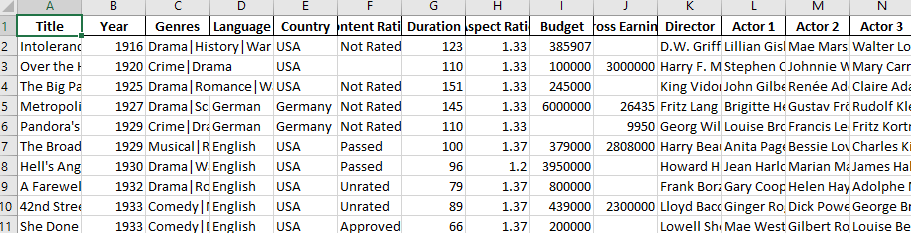

The Data Set

In this tutorial, we will use a multi-sheet Excel file we created from Kaggle’s IMDB Scores data. You can download the file here.

Our Excel file has three sheets: ‘1900s,’ ‘2000s,’ and ‘2010s.’ Each sheet has data for movies from those years.

We will use this data set to find the ratings distribution for the movies, visualize movies with highest ratings and net earnings and calculate statistical information about the movies. We will be analyzing and exploring this data using Python and pandas, thus demonstrating pandas capabilities for working with Excel data in Python.

Read data from the Excel file

We need to first import the data from the Excel file into pandas. To do that, we start by importing the pandas module.

import pandas as pdWe then use the pandas’ read_excel method to read in data from the Excel file. The easiest way to call this method is to pass the file name. If no sheet name is specified then it will read the first sheet in the index (as shown below).

excel_file = 'movies.xls'

movies = pd.read_excel(excel_file)Here, the read_excel method read the data from the Excel file into a pandas DataFrame object. Pandas defaults to storing data in DataFrames. We then stored this DataFrame into a variable called movies.

Pandas has a built-in DataFrame.head() method that we can use to easily display the first few rows of our DataFrame. If no argument is passed, it will display first five rows. If a number is passed, it will display the equal number of rows from the top.

movies.head()| Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | … | Facebook Likes — Actor 1 | Facebook Likes — Actor 2 | Facebook Likes — Actor 3 | Facebook Likes — cast Total | Facebook likes — Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | Intolerance: Love’s Struggle Throughout the Ages | 1916 | Drama|History|War | NaN | USA | Not Rated | 123 | 1.33 | 385907.0 | NaN | … | 436 | 22 | 9.0 | 481 | 691 | 1 | 10718 | 88 | 69.0 | 8.0 |

| 1 | Over the Hill to the Poorhouse | 1920 | Crime|Drama | NaN | USA | NaN | 110 | 1.33 | 100000.0 | 3000000.0 | … | 2 | 2 | 0.0 | 4 | 0 | 1 | 5 | 1 | 1.0 | 4.8 |

| 2 | The Big Parade | 1925 | Drama|Romance|War | NaN | USA | Not Rated | 151 | 1.33 | 245000.0 | NaN | … | 81 | 12 | 6.0 | 108 | 226 | 0 | 4849 | 45 | 48.0 | 8.3 |

| 3 | Metropolis | 1927 | Drama|Sci-Fi | German | Germany | Not Rated | 145 | 1.33 | 6000000.0 | 26435.0 | … | 136 | 23 | 18.0 | 203 | 12000 | 1 | 111841 | 413 | 260.0 | 8.3 |

| 4 | Pandora’s Box | 1929 | Crime|Drama|Romance | German | Germany | Not Rated | 110 | 1.33 | NaN | 9950.0 | … | 426 | 20 | 3.0 | 455 | 926 | 1 | 7431 | 84 | 71.0 | 8.0 |

5 rows × 25 columns

Excel files quite often have multiple sheets and the ability to read a specific sheet or all of them is very important. To make this easy, the pandas read_excel method takes an argument called sheetname that tells pandas which sheet to read in the data from. For this, you can either use the sheet name or the sheet number. Sheet numbers start with zero. If the sheetname argument is not given, it defaults to zero and pandas will import the first sheet.

By default, pandas will automatically assign a numeric index or row label starting with zero. You may want to leave the default index as such if your data doesn’t have a column with unique values that can serve as a better index. In case there is a column that you feel would serve as a better index, you can override the default behavior by setting index_col property to a column. It takes a numeric value for setting a single column as index or a list of numeric values for creating a multi-index.

In the below code, we are choosing the first column, ‘Title’, as index (index=0) by passing zero to the index_col argument.

movies_sheet1 = pd.read_excel(excel_file, sheetname=0, index_col=0)

movies_sheet1.head()| Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | Director | … | Facebook Likes — Actor 1 | Facebook Likes — Actor 2 | Facebook Likes — Actor 3 | Facebook Likes — cast Total | Facebook likes — Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Title | |||||||||||||||||||||

| Intolerance: Love’s Struggle Throughout the Ages | 1916 | Drama|History|War | NaN | USA | Not Rated | 123 | 1.33 | 385907.0 | NaN | D.W. Griffith | … | 436 | 22 | 9.0 | 481 | 691 | 1 | 10718 | 88 | 69.0 | 8.0 |

| Over the Hill to the Poorhouse | 1920 | Crime|Drama | NaN | USA | NaN | 110 | 1.33 | 100000.0 | 3000000.0 | Harry F. Millarde | … | 2 | 2 | 0.0 | 4 | 0 | 1 | 5 | 1 | 1.0 | 4.8 |

| The Big Parade | 1925 | Drama|Romance|War | NaN | USA | Not Rated | 151 | 1.33 | 245000.0 | NaN | King Vidor | … | 81 | 12 | 6.0 | 108 | 226 | 0 | 4849 | 45 | 48.0 | 8.3 |

| Metropolis | 1927 | Drama|Sci-Fi | German | Germany | Not Rated | 145 | 1.33 | 6000000.0 | 26435.0 | Fritz Lang | … | 136 | 23 | 18.0 | 203 | 12000 | 1 | 111841 | 413 | 260.0 | 8.3 |

| Pandora’s Box | 1929 | Crime|Drama|Romance | German | Germany | Not Rated | 110 | 1.33 | NaN | 9950.0 | Georg Wilhelm Pabst | … | 426 | 20 | 3.0 | 455 | 926 | 1 | 7431 | 84 | 71.0 | 8.0 |

5 rows × 24 columns

As you noticed above, our Excel data file has three sheets. We already read the first sheet in a DataFrame above. Now, using the same syntax, we will read in rest of the two sheets too.

movies_sheet2 = pd.read_excel(excel_file, sheetname=1, index_col=0)

movies_sheet2.head()| Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | Director | … | Facebook Likes — Actor 1 | Facebook Likes — Actor 2 | Facebook Likes — Actor 3 | Facebook Likes — cast Total | Facebook likes — Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Title | |||||||||||||||||||||

| 102 Dalmatians | 2000 | Adventure|Comedy|Family | English | USA | G | 100.0 | 1.85 | 85000000.0 | 66941559.0 | Kevin Lima | … | 2000.0 | 795.0 | 439.0 | 4182 | 372 | 1 | 26413 | 77.0 | 84.0 | 4.8 |

| 28 Days | 2000 | Comedy|Drama | English | USA | PG-13 | 103.0 | 1.37 | 43000000.0 | 37035515.0 | Betty Thomas | … | 12000.0 | 10000.0 | 664.0 | 23864 | 0 | 1 | 34597 | 194.0 | 116.0 | 6.0 |

| 3 Strikes | 2000 | Comedy | English | USA | R | 82.0 | 1.85 | 6000000.0 | 9821335.0 | DJ Pooh | … | 939.0 | 706.0 | 585.0 | 3354 | 118 | 1 | 1415 | 10.0 | 22.0 | 4.0 |

| Aberdeen | 2000 | Drama | English | UK | NaN | 106.0 | 1.85 | 6500000.0 | 64148.0 | Hans Petter Moland | … | 844.0 | 2.0 | 0.0 | 846 | 260 | 0 | 2601 | 35.0 | 28.0 | 7.3 |

| All the Pretty Horses | 2000 | Drama|Romance|Western | English | USA | PG-13 | 220.0 | 2.35 | 57000000.0 | 15527125.0 | Billy Bob Thornton | … | 13000.0 | 861.0 | 820.0 | 15006 | 652 | 2 | 11388 | 183.0 | 85.0 | 5.8 |

5 rows × 24 columns

movies_sheet3 = pd.read_excel(excel_file, sheetname=2, index_col=0)

movies_sheet3.head()| Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | Director | … | Facebook Likes — Actor 1 | Facebook Likes — Actor 2 | Facebook Likes — Actor 3 | Facebook Likes — cast Total | Facebook likes — Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Title | |||||||||||||||||||||

| 127 Hours | 2010.0 | Adventure|Biography|Drama|Thriller | English | USA | R | 94.0 | 1.85 | 18000000.0 | 18329466.0 | Danny Boyle | … | 11000.0 | 642.0 | 223.0 | 11984 | 63000 | 0.0 | 279179 | 440.0 | 450.0 | 7.6 |

| 3 Backyards | 2010.0 | Drama | English | USA | R | 88.0 | NaN | 300000.0 | NaN | Eric Mendelsohn | … | 795.0 | 659.0 | 301.0 | 1884 | 92 | 0.0 | 554 | 23.0 | 20.0 | 5.2 |

| 3 | 2010.0 | Comedy|Drama|Romance | German | Germany | Unrated | 119.0 | 2.35 | NaN | 59774.0 | Tom Tykwer | … | 24.0 | 20.0 | 9.0 | 69 | 2000 | 0.0 | 4212 | 18.0 | 76.0 | 6.8 |

| 8: The Mormon Proposition | 2010.0 | Documentary | English | USA | R | 80.0 | 1.78 | 2500000.0 | 99851.0 | Reed Cowan | … | 191.0 | 12.0 | 5.0 | 210 | 0 | 0.0 | 1138 | 30.0 | 28.0 | 7.1 |

| A Turtle’s Tale: Sammy’s Adventures | 2010.0 | Adventure|Animation|Family | English | France | PG | 88.0 | 2.35 | NaN | NaN | Ben Stassen | … | 783.0 | 749.0 | 602.0 | 3874 | 0 | 2.0 | 5385 | 22.0 | 56.0 | 6.1 |

5 rows × 24 columns

Since all the three sheets have similar data but for different recordsmovies, we will create a single DataFrame from all the three DataFrames we created above. We will use the pandas concat method for this and pass in the names of the three DataFrames we just created and assign the results to a new DataFrame object, movies. By keeping the DataFrame name same as before, we are over-writing the previously created DataFrame.

movies = pd.concat([movies_sheet1, movies_sheet2, movies_sheet3])We can check if this concatenation by checking the number of rows in the combined DataFrame by calling the method shape on it that will give us the number of rows and columns.

movies.shape(5042, 24)Using the ExcelFile class to read multiple sheets

We can also use the ExcelFile class to work with multiple sheets from the same Excel file. We first wrap the Excel file using ExcelFile and then pass it to read_excel method.

xlsx = pd.ExcelFile(excel_file)

movies_sheets = []

for sheet in xlsx.sheet_names:

movies_sheets.append(xlsx.parse(sheet))

movies = pd.concat(movies_sheets)If you are reading an Excel file with a lot of sheets and are creating a lot of DataFrames, ExcelFile is more convenient and efficient in comparison to read_excel. With ExcelFile, you only need to pass the Excel file once, and then you can use it to get the DataFrames. When using read_excel, you pass the Excel file every time and hence the file is loaded again for every sheet. This can be a huge performance drag if the Excel file has many sheets with a large number of rows.

Exploring the data

Now that we have read in the movies data set from our Excel file, we can start exploring it using pandas. A pandas DataFrame stores the data in a tabular format, just like the way Excel displays the data in a sheet. Pandas has a lot of built-in methods to explore the DataFrame we created from the Excel file we just read in.

We already introduced the method head in the previous section that displays few rows from the top from the DataFrame. Let’s look at few more methods that come in handy while exploring the data set.

We can use the shape method to find out the number of rows and columns for the DataFrame.

movies.shape(5042, 25)This tells us our Excel file has 5042 records and 25 columns or observations. This can be useful in reporting the number of records and columns and comparing that with the source data set.

We can use the tail method to view the bottom rows. If no parameter is passed, only the bottom five rows are returned.

movies.tail()| Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | … | Facebook Likes — Actor 1 | Facebook Likes — Actor 2 | Facebook Likes — Actor 3 | Facebook Likes — cast Total | Facebook likes — Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1599 | War & Peace | NaN | Drama|History|Romance|War | English | UK | TV-14 | NaN | 16.00 | NaN | NaN | … | 1000.0 | 888.0 | 502.0 | 4528 | 11000 | 1.0 | 9277 | 44.0 | 10.0 | 8.2 |

| 1600 | Wings | NaN | Comedy|Drama | English | USA | NaN | 30.0 | 1.33 | NaN | NaN | … | 685.0 | 511.0 | 424.0 | 1884 | 1000 | 5.0 | 7646 | 56.0 | 19.0 | 7.3 |

| 1601 | Wolf Creek | NaN | Drama|Horror|Thriller | English | Australia | NaN | NaN | 2.00 | NaN | NaN | … | 511.0 | 457.0 | 206.0 | 1617 | 954 | 0.0 | 726 | 6.0 | 2.0 | 7.1 |

| 1602 | Wuthering Heights | NaN | Drama|Romance | English | UK | NaN | 142.0 | NaN | NaN | NaN | … | 27000.0 | 698.0 | 427.0 | 29196 | 0 | 2.0 | 6053 | 33.0 | 9.0 | 7.7 |

| 1603 | Yu-Gi-Oh! Duel Monsters | NaN | Action|Adventure|Animation|Family|Fantasy | Japanese | Japan | NaN | 24.0 | NaN | NaN | NaN | … | 0.0 | NaN | NaN | 0 | 124 | 0.0 | 12417 | 51.0 | 6.0 | 7.0 |

5 rows × 25 columns

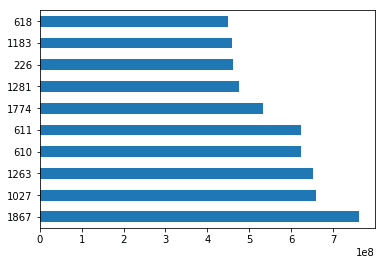

In Excel, you’re able to sort a sheet based on the values in one or more columns. In pandas, you can do the same thing with the sort_values method. For example, let’s sort our movies DataFrame based on the Gross Earnings column.

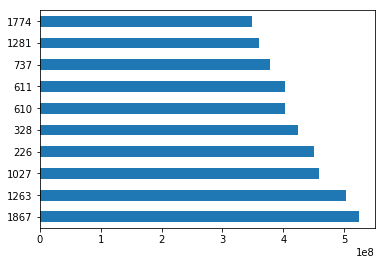

sorted_by_gross = movies.sort_values(['Gross Earnings'], ascending=False)Since we have the data sorted by values in a column, we can do few interesting things with it. For example, we can display the top 10 movies by Gross Earnings.

sorted_by_gross["Gross Earnings"].head(10)1867 760505847.0

1027 658672302.0

1263 652177271.0

610 623279547.0

611 623279547.0

1774 533316061.0

1281 474544677.0

226 460935665.0

1183 458991599.0

618 448130642.0

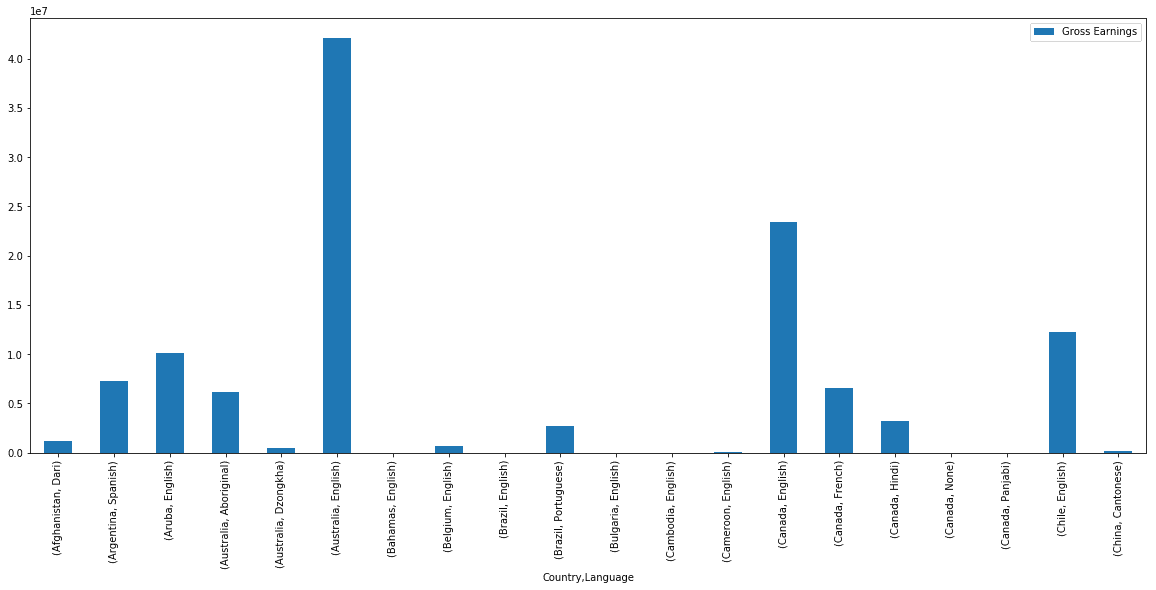

Name: Gross Earnings, dtype: float64We can also create a plot for the top 10 movies by Gross Earnings. Pandas makes it easy to visualize your data with plots and charts through matplotlib, a popular data visualization library. With a couple lines of code, you can start plotting. Moreover, matplotlib plots work well inside Jupyter Notebooks since you can displace the plots right under the code.

First, we import the matplotlib module and set matplotlib to display the plots right in the Jupyter Notebook.

import matplotlib.pyplot as plt%matplotlib inlineWe will draw a bar plot where each bar will represent one of the top 10 movies. We can do this by calling the plot method and setting the argument kind to barh. This tells matplotlib to draw a horizontal bar plot.

sorted_by_gross['Gross Earnings'].head(10).plot(kind="barh")

plt.show()Let’s create a histogram of IMDB Scores to check the distribution of IMDB Scores across all movies. Histograms are a good way to visualize the distribution of a data set. We use the plot method on the IMDB Scores series from our movies DataFrame and pass it the argument.

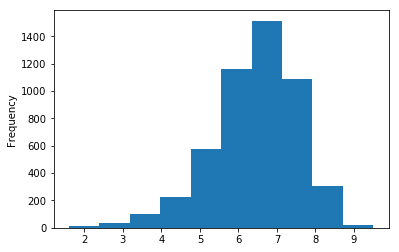

movies['IMDB Score'].plot(kind="hist")

plt.show()This data visualization suggests that most of the IMDB Scores fall between six and eight.

Getting statistical information about the data

Pandas has some very handy methods to look at the statistical data about our data set. For example, we can use the describe method to get a statistical summary of the data set.

movies.describe()| Year | Duration | Aspect Ratio | Budget | Gross Earnings | Facebook Likes — Director | Facebook Likes — Actor 1 | Facebook Likes — Actor 2 | Facebook Likes — Actor 3 | Facebook Likes — cast Total | Facebook likes — Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| count | 4935.000000 | 5028.000000 | 4714.000000 | 4.551000e+03 | 4.159000e+03 | 4938.000000 | 5035.000000 | 5029.000000 | 5020.000000 | 5042.000000 | 5042.000000 | 5029.000000 | 5.042000e+03 | 5022.000000 | 4993.000000 | 5042.000000 |

| mean | 2002.470517 | 107.201074 | 2.220403 | 3.975262e+07 | 4.846841e+07 | 686.621709 | 6561.323932 | 1652.080533 | 645.009761 | 9700.959143 | 7527.457160 | 1.371446 | 8.368475e+04 | 272.770808 | 140.194272 | 6.442007 |

| std | 12.474599 | 25.197441 | 1.385113 | 2.061149e+08 | 6.845299e+07 | 2813.602405 | 15021.977635 | 4042.774685 | 1665.041728 | 18165.101925 | 19322.070537 | 2.013683 | 1.384940e+05 | 377.982886 | 121.601675 | 1.125189 |

| min | 1916.000000 | 7.000000 | 1.180000 | 2.180000e+02 | 1.620000e+02 | 0.000000 | 0.000000 | 0.000000 | 0.000000 | 0.000000 | 0.000000 | 0.000000 | 5.000000e+00 | 1.000000 | 1.000000 | 1.600000 |

| 25% | 1999.000000 | 93.000000 | 1.850000 | 6.000000e+06 | 5.340988e+06 | 7.000000 | 614.500000 | 281.000000 | 133.000000 | 1411.250000 | 0.000000 | 0.000000 | 8.599250e+03 | 65.000000 | 50.000000 | 5.800000 |

| 50% | 2005.000000 | 103.000000 | 2.350000 | 2.000000e+07 | 2.551750e+07 | 49.000000 | 988.000000 | 595.000000 | 371.500000 | 3091.000000 | 166.000000 | 1.000000 | 3.437100e+04 | 156.000000 | 110.000000 | 6.600000 |

| 75% | 2011.000000 | 118.000000 | 2.350000 | 4.500000e+07 | 6.230944e+07 | 194.750000 | 11000.000000 | 918.000000 | 636.000000 | 13758.750000 | 3000.000000 | 2.000000 | 9.634700e+04 | 326.000000 | 195.000000 | 7.200000 |

| max | 2016.000000 | 511.000000 | 16.000000 | 1.221550e+10 | 7.605058e+08 | 23000.000000 | 640000.000000 | 137000.000000 | 23000.000000 | 656730.000000 | 349000.000000 | 43.000000 | 1.689764e+06 | 5060.000000 | 813.000000 | 9.500000 |

The describe method displays below information for each of the columns.

- the count or number of values

- mean

- standard deviation

- minimum, maximum

- 25%, 50%, and 75% quantile

Please note that this information will be calculated only for the numeric values.

We can also use the corresponding method to access this information one at a time. For example, to get the mean of a particular column, you can use the mean method on that column.

movies["Gross Earnings"].mean()48468407.526809327Just like mean, there are methods available for each of the statistical information we want to access. You can read about these methods in our free pandas cheat sheet.

Reading files with no header and skipping records

Earlier in this tutorial, we saw some ways to read a particular kind of Excel file that had headers and no rows that needed skipping. Sometimes, the Excel sheet doesn’t have any header row. For such instances, you can tell pandas not to consider the first row as header or columns names. And If the Excel sheet’s first few rows contain data that should not be read in, you can ask the read_excel method to skip a certain number of rows, starting from the top.

For example, look at the top few rows of this Excel file.

This file obviously has no header and first four rows are not actual records and hence should not be read in. We can tell read_excel there is no header by setting argument header to None and we can skip first four rows by setting argument skiprows to four.

movies_skip_rows = pd.read_excel("movies-no-header-skip-rows.xls", header=None, skiprows=4)

movies_skip_rows.head(5)| 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | … | 15 | 16 | 17 | 18 | 19 | 20 | 21 | 22 | 23 | 24 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | Metropolis | 1927 | Drama|Sci-Fi | German | Germany | Not Rated | 145 | 1.33 | 6000000.0 | 26435.0 | … | 136 | 23 | 18.0 | 203 | 12000 | 1 | 111841 | 413 | 260.0 | 8.3 |

| 1 | Pandora’s Box | 1929 | Crime|Drama|Romance | German | Germany | Not Rated | 110 | 1.33 | NaN | 9950.0 | … | 426 | 20 | 3.0 | 455 | 926 | 1 | 7431 | 84 | 71.0 | 8.0 |

| 2 | The Broadway Melody | 1929 | Musical|Romance | English | USA | Passed | 100 | 1.37 | 379000.0 | 2808000.0 | … | 77 | 28 | 4.0 | 109 | 167 | 8 | 4546 | 71 | 36.0 | 6.3 |

| 3 | Hell’s Angels | 1930 | Drama|War | English | USA | Passed | 96 | 1.20 | 3950000.0 | NaN | … | 431 | 12 | 4.0 | 457 | 279 | 1 | 3753 | 53 | 35.0 | 7.8 |

| 4 | A Farewell to Arms | 1932 | Drama|Romance|War | English | USA | Unrated | 79 | 1.37 | 800000.0 | NaN | … | 998 | 164 | 99.0 | 1284 | 213 | 1 | 3519 | 46 | 42.0 | 6.6 |

5 rows × 25 columns

We skipped four rows from the sheet and used none of the rows as the header. Also, notice that one can combine different options in a single read statement. To skip rows at the bottom of the sheet, you can use option skip_footer, which works just like skiprows, the only difference being the rows are counted from the bottom upwards.

The column names in the previous DataFrame are numeric and were allotted as default by the pandas. We can rename the column names to descriptive ones by calling the method columns on the DataFrame and passing the column names as a list.

movies_skip_rows.columns = ['Title', 'Year', 'Genres', 'Language', 'Country', 'Content Rating', 'Duration', 'Aspect Ratio', 'Budget', 'Gross Earnings', 'Director', 'Actor 1', 'Actor 2', 'Actor 3', 'Facebook Likes - Director', 'Facebook Likes - Actor 1', 'Facebook Likes - Actor 2', 'Facebook Likes - Actor 3', 'Facebook Likes - cast Total', 'Facebook likes - Movie', 'Facenumber in posters', 'User Votes', 'Reviews by Users', 'Reviews by Crtiics', 'IMDB Score']

movies_skip_rows.head()| Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | … | Facebook Likes — Actor 1 | Facebook Likes — Actor 2 | Facebook Likes — Actor 3 | Facebook Likes — cast Total | Facebook likes — Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | Metropolis | 1927 | Drama|Sci-Fi | German | Germany | Not Rated | 145 | 1.33 | 6000000.0 | 26435.0 | … | 136 | 23 | 18.0 | 203 | 12000 | 1 | 111841 | 413 | 260.0 | 8.3 |

| 1 | Pandora’s Box | 1929 | Crime|Drama|Romance | German | Germany | Not Rated | 110 | 1.33 | NaN | 9950.0 | … | 426 | 20 | 3.0 | 455 | 926 | 1 | 7431 | 84 | 71.0 | 8.0 |

| 2 | The Broadway Melody | 1929 | Musical|Romance | English | USA | Passed | 100 | 1.37 | 379000.0 | 2808000.0 | … | 77 | 28 | 4.0 | 109 | 167 | 8 | 4546 | 71 | 36.0 | 6.3 |

| 3 | Hell’s Angels | 1930 | Drama|War | English | USA | Passed | 96 | 1.20 | 3950000.0 | NaN | … | 431 | 12 | 4.0 | 457 | 279 | 1 | 3753 | 53 | 35.0 | 7.8 |

| 4 | A Farewell to Arms | 1932 | Drama|Romance|War | English | USA | Unrated | 79 | 1.37 | 800000.0 | NaN | … | 998 | 164 | 99.0 | 1284 | 213 | 1 | 3519 | 46 | 42.0 | 6.6 |

5 rows × 25 columns

Now that we have seen how to read a subset of rows from the Excel file, we can learn how to read a subset of columns.

Reading a subset of columns

Although read_excel defaults to reading and importing all columns, you can choose to import only certain columns. By passing parse_cols=6, we are telling the read_excel method to read only the first columns till index six or first seven columns (the first column being indexed zero).

movies_subset_columns = pd.read_excel(excel_file, parse_cols=6)

movies_subset_columns.head()| Title | Year | Genres | Language | Country | Content Rating | Duration | |

|---|---|---|---|---|---|---|---|

| 0 | Intolerance: Love’s Struggle Throughout the Ages | 1916 | Drama|History|War | NaN | USA | Not Rated | 123 |

| 1 | Over the Hill to the Poorhouse | 1920 | Crime|Drama | NaN | USA | NaN | 110 |

| 2 | The Big Parade | 1925 | Drama|Romance|War | NaN | USA | Not Rated | 151 |

| 3 | Metropolis | 1927 | Drama|Sci-Fi | German | Germany | Not Rated | 145 |

| 4 | Pandora’s Box | 1929 | Crime|Drama|Romance | German | Germany | Not Rated | 110 |

Alternatively, you can pass in a list of numbers, which will let you import columns at particular indexes.

Applying formulas on the columns

One of the much-used features of Excel is to apply formulas to create new columns from existing column values. In our Excel file, we have Gross Earnings and Budget columns. We can get Net earnings by subtracting Budget from Gross earnings. We could then apply this formula in the Excel file to all the rows. We can do this in pandas also as shown below.

movies["Net Earnings"] = movies["Gross Earnings"] - movies["Budget"]Above, we used pandas to create a new column called Net Earnings, and populated it with the difference of Gross Earnings and Budget. It’s worth noting the difference here in how formulas are treated in Excel versus pandas. In Excel, a formula lives in the cell and updates when the data changes — with Python, the calculations happen and the values are stored — if Gross Earnings for one movie was manually changed, Net Earnings won’t be updated.

Let’s use the sort_values method to sort the data by the new column we created and visualize the top 10 movies by Net Earnings.

sorted_movies = movies[['Net Earnings']].sort_values(['Net Earnings'], ascending=[False])sorted_movies.head(10)['Net Earnings'].plot.barh()

plt.show()Pivot Table in pandas

Advanced Excel users also often use pivot tables. A pivot table summarizes the data of another table by grouping the data on an index and applying operations such as sorting, summing, or averaging. You can use this feature in pandas too.

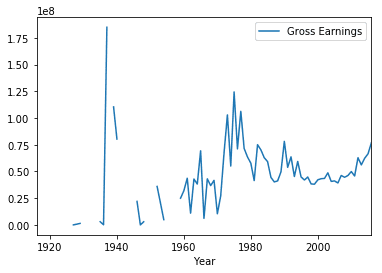

We need to first identify the column or columns that will serve as the index, and the column(s) on which the summarizing formula will be applied. Let’s start small, by choosing Year as the index column and Gross Earnings as the summarization column and creating a separate DataFrame from this data.

movies_subset = movies[['Year', 'Gross Earnings']]

movies_subset.head()| Year | Gross Earnings | |

|---|---|---|

| 0 | 1916.0 | NaN |

| 1 | 1920.0 | 3000000.0 |

| 2 | 1925.0 | NaN |

| 3 | 1927.0 | 26435.0 |

| 4 | 1929.0 | 9950.0 |

We now call pivot_table on this subset of data. The method pivot_table takes a parameter index. As mentioned, we want to use Year as the index.

earnings_by_year = movies_subset.pivot_table(index=['Year'])

earnings_by_year.head()| Gross Earnings | |

|---|---|

| Year | |

| 1916.0 | NaN |

| 1920.0 | 3000000.0 |

| 1925.0 | NaN |

| 1927.0 | 26435.0 |

| 1929.0 | 1408975.0 |

This gave us a pivot table with grouping on Year and summarization on the sum of Gross Earnings. Notice, we didn’t need to specify Gross Earnings column explicitly as pandas automatically identified it the values on which summarization should be applied.

We can use this pivot table to create some data visualizations. We can call the plot method on the DataFrame to create a line plot and call the show method to display the plot in the notebook.

earnings_by_year.plot()

plt.show()We saw how to pivot with a single column as the index. Things will get more interesting if we can use multiple columns. Let’s create another DataFrame subset but this time we will choose the columns, Country, Language and Gross Earnings.

movies_subset = movies[['Country', 'Language', 'Gross Earnings']]

movies_subset.head()| Country | Language | Gross Earnings | |

|---|---|---|---|

| 0 | USA | NaN | NaN |

| 1 | USA | NaN | 3000000.0 |

| 2 | USA | NaN | NaN |

| 3 | Germany | German | 26435.0 |

| 4 | Germany | German | 9950.0 |

We will use columns Country and Language as the index for the pivot table. We will use Gross Earnings as summarization table, however, we do not need to specify this explicitly as we saw earlier.

earnings_by_co_lang = movies_subset.pivot_table(index=['Country', 'Language'])

earnings_by_co_lang.head()| Gross Earnings | ||

|---|---|---|

| Country | Language | |

| Afghanistan | Dari | 1.127331e+06 |

| Argentina | Spanish | 7.230936e+06 |

| Aruba | English | 1.007614e+07 |

| Australia | Aboriginal | 6.165429e+06 |

| Dzongkha | 5.052950e+05 |

Let’s visualize this pivot table with a bar plot. Since there are still few hundred records in this pivot table, we will plot just a few of them.

earnings_by_co_lang.head(20).plot(kind='bar', figsize=(20,8))

plt.show()Exporting the results to Excel

If you’re going to be working with colleagues who use Excel, saving Excel files out of pandas is important. You can export or write a pandas DataFrame to an Excel file using pandas to_excel method. Pandas uses the xlwt Python module internally for writing to Excel files. The to_excel method is called on the DataFrame we want to export.We also need to pass a filename to which this DataFrame will be written.

movies.to_excel('output.xlsx')By default, the index is also saved to the output file. However, sometimes the index doesn’t provide any useful information. For example, the movies DataFrame has a numeric auto-increment index, that was not part of the original Excel data.

movies.head()| Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | … | Facebook Likes — Actor 2 | Facebook Likes — Actor 3 | Facebook Likes — cast Total | Facebook likes — Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score | Net Earnings | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | Intolerance: Love’s Struggle Throughout the Ages | 1916.0 | Drama|History|War | NaN | USA | Not Rated | 123.0 | 1.33 | 385907.0 | NaN | … | 22.0 | 9.0 | 481 | 691 | 1.0 | 10718 | 88.0 | 69.0 | 8.0 | NaN |

| 1 | Over the Hill to the Poorhouse | 1920.0 | Crime|Drama | NaN | USA | NaN | 110.0 | 1.33 | 100000.0 | 3000000.0 | … | 2.0 | 0.0 | 4 | 0 | 1.0 | 5 | 1.0 | 1.0 | 4.8 | 2900000.0 |

| 2 | The Big Parade | 1925.0 | Drama|Romance|War | NaN | USA | Not Rated | 151.0 | 1.33 | 245000.0 | NaN | … | 12.0 | 6.0 | 108 | 226 | 0.0 | 4849 | 45.0 | 48.0 | 8.3 | NaN |

| 3 | Metropolis | 1927.0 | Drama|Sci-Fi | German | Germany | Not Rated | 145.0 | 1.33 | 6000000.0 | 26435.0 | … | 23.0 | 18.0 | 203 | 12000 | 1.0 | 111841 | 413.0 | 260.0 | 8.3 | -5973565.0 |

| 4 | Pandora’s Box | 1929.0 | Crime|Drama|Romance | German | Germany | Not Rated | 110.0 | 1.33 | NaN | 9950.0 | … | 20.0 | 3.0 | 455 | 926 | 1.0 | 7431 | 84.0 | 71.0 | 8.0 | NaN |

5 rows × 26 columns

You can choose to skip the index by passing along index-False.

movies.to_excel('output.xlsx', index=False)We need to be able to make our output files look nice before we can send it out to our co-workers. We can use pandas ExcelWriter class along with the XlsxWriter Python module to apply the formatting.

We can do use these advanced output options by creating a ExcelWriter object and use this object to write to the EXcel file.

writer = pd.ExcelWriter('output.xlsx', engine='xlsxwriter')

movies.to_excel(writer, index=False, sheet_name='report')

workbook = writer.bookworksheet = writer.sheets['report']We can apply customizations by calling add_format on the workbook we are writing to. Here we are setting header format as bold.

header_fmt = workbook.add_format({'bold': True})

worksheet.set_row(0, None, header_fmt)Finally, we save the output file by calling the method save on the writer object.

writer.save()As an example, we saved the data with column headers set as bold. And the saved file looks like the image below.

Like this, one can use XlsxWriter to apply various formatting to the output Excel file.

Conclusion

Pandas is not a replacement for Excel. Both tools have their place in the data analysis workflow and can be very great companion tools. As we demonstrated, pandas can do a lot of complex data analysis and manipulations, which depending on your need and expertise, can go beyond what you can achieve if you are just using Excel. One of the major benefits of using Python and pandas over Excel is that it helps you automate Excel file processing by writing scripts and integrating with your automated data workflow. Pandas also has excellent methods for reading all kinds of data from Excel files. You can export your results from pandas back to Excel too if that’s preferred by your intended audience.

On the other hand, Excel is a such a widely used data tool, it’s not a wise to ignore it. Acquiring expertise in both pandas and Excel and making them work together gives you skills that can help you stand out in your organization.

If you’d like to learn more about this topic, check out Dataquest’s interactive Pandas and NumPy Fundamentals course, and our Data Analyst in Python, and Data Scientist in Python paths that will help you become job-ready in around 6 months.